Do you want your users to upload large files? I mean really large files, like multi-GB videos. Do your users live in places with bad or unstable connections? If your answer is “yes”, then this article is exactly what you are looking for.

Background

At Chaino Social Network, we care about our users: their feedback is our daily fuel, and a smooth UX is what we aim for in everything we build for them. They asked for a video upload feature, and we built it. They asked for a shorter processing time, and we delivered that too. They asked to upload larger video files, so we took our precautions and raised the limit to 1 GB. Then we hit a wall: we were swamped with feedback complaining that video uploads are easily interrupted by bad networks, and the bigger the file gets, the more vulnerable it becomes to interruptions and failures. That is when we started our hunt for a solution. But first, let’s look at how we were uploading until then.

Problem with the Normal Way of Uploading

Our normal way of uploading is basically to use the change event to validate the file, then upload it as a multipart request. Nginx does all the work for us and hands the file path to our PHP backend, where we can start processing the video, like the following:

```javascript
$('#uploadBtn').change(function () {
    var file = this.files[0];
    if (typeof file === "undefined") {
        return;
    }

    // Some validations go here using `file.size` & `file.type`

    var myFormData = new FormData();
    myFormData.append('videoFile', file); // `videoFile` is the field name expected at the backend

    $.ajax({
        url: '/file/post',
        type: 'POST',
        processData: false,
        contentType: false,
        dataType: 'json',
        data: myFormData,
        xhr: function () { // Custom XMLHttpRequest
            var myXhr = $.ajaxSettings.xhr();
            if (myXhr.upload) { // Check if upload property exists
                myXhr.upload.addEventListener('progress', handleProgressBar, false); // For handling the progress of the upload
            }
            return myXhr;
        },
        success: function () {
            // Upload has been completed
        }
    });
});

function handleProgressBar(e) {
    if (e.lengthComputable) {
        // Reflect values on your progress bar using `e.total` & `e.loaded`
    }
}
```

```php
<?php

class FileController {

    public function postAction() {
        // Disable views/layout the way that suits your framework

        // The field name must match the one appended to the FormData on the client (`videoFile`)
        if (!isset($_FILES['videoFile'])) {
            // Throw an exception or handle it your way
        }

        // Nginx already did all the work for us, & received the file in the `/tmp` folder
        $uploadedFile = $_FILES['videoFile'];
        $originalFileName = $uploadedFile['name'];
        $size = $uploadedFile['size'];
        $completeFilePath = $uploadedFile['tmp_name'];

        // Do some validations on the file's type & size (using `$size` & `filesize($completeFilePath)`),
        // & if all is fine, then start processing your file here, & probably return a success message
    }
}
```

As you can see, both the client and the server expect the file to be sent in one shot, no matter how big it is. But in the real world, networks get interrupted all the time, which forces the user to upload the file over and over again from the beginning. That is super frustrating with large files like videos!

In our hunt for a solution, we first found some honorable mentions like Resumable.js. They didn’t really offer a complete solution, though, because most of them focus only on the client side, while the server side is actually the real challenge. Then we found the only truly complete solution we came across, Tus.io, which was beyond our dreams!

Solution: The Awesome One

Now we need a solution that can do two things:

- Protect our users from network interruptions: the solution should automatically retry sending the file until the network is hopefully stable again.

- Give our users a resumable upload in case of total network failure, so that once the network comes back, they can continue uploading the file where they left off.

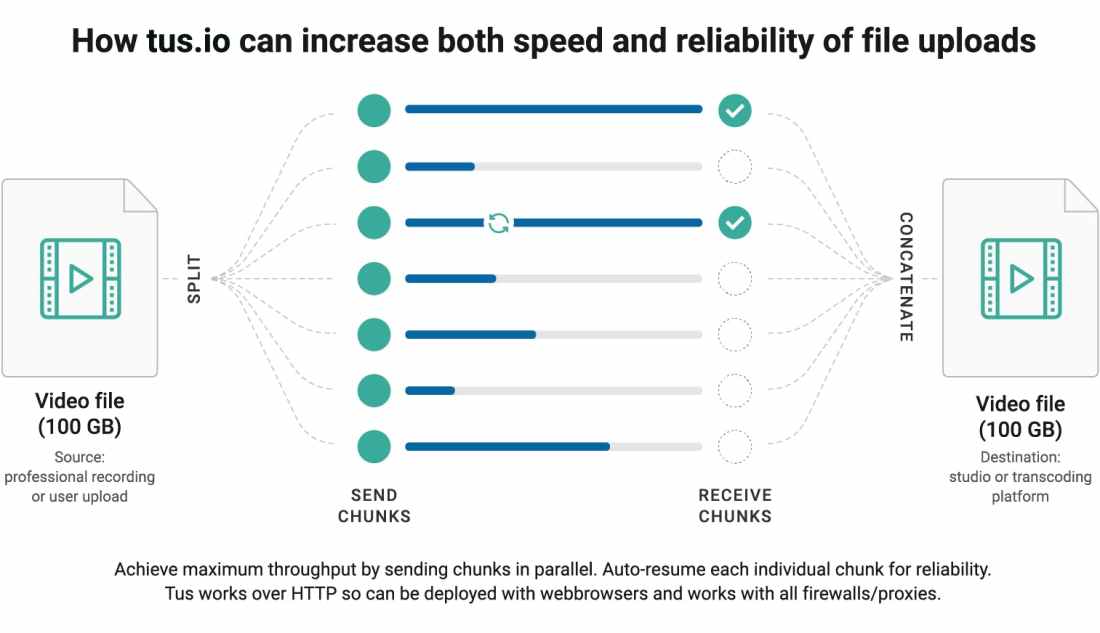

I know these requirements seem like a dream, but this is why Tus.io is so awesome in so many levels, simply this is how it works:

(Illustration by Alexander Zaytsev)
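
To make that flow a bit more concrete, here is a minimal in-memory sketch of the resumable part of the tus protocol. In the real protocol the client creates the upload with a POST, asks how many bytes the server already holds with a HEAD request (answered in the Upload-Offset header), and sends the remaining bytes from that offset with PATCH. The names `FakeTusServer` and `uploadFrom` and the failure injection are made up for illustration; no network or tus library is involved.

```javascript
// In-memory stand-in for a tus server: it only tracks the bytes it holds per upload.
class FakeTusServer {
  constructor() {
    this.uploads = new Map(); // upload id -> { length, received: Buffer }
    this.nextId = 1;
  }

  // Mirrors the creation request (POST): register an upload of a known total length.
  create(length) {
    const id = String(this.nextId++);
    this.uploads.set(id, { length, received: Buffer.alloc(0) });
    return id;
  }

  // Mirrors HEAD: report how many bytes have been stored so far.
  head(id) {
    return this.uploads.get(id).received.length;
  }

  // Mirrors PATCH: append bytes, but only if the client's offset matches the server's.
  patch(id, offset, chunk) {
    const upload = this.uploads.get(id);
    if (offset !== upload.received.length) {
      throw new Error("409 Conflict: offset mismatch");
    }
    upload.received = Buffer.concat([upload.received, chunk]);
    return upload.received.length;
  }
}

// Sends `data` in chunks, starting from whatever offset the server reports.
// `failAfterChunks` simulates the network dropping after that many chunks.
function uploadFrom(server, id, data, chunkSize, failAfterChunks = Infinity) {
  let offset = server.head(id);
  let sent = 0;
  while (offset < data.length) {
    if (sent === failAfterChunks) {
      throw new Error("network interrupted");
    }
    const chunk = data.subarray(offset, offset + chunkSize);
    offset = server.patch(id, offset, chunk);
    sent += 1;
  }
  return offset;
}

const server = new FakeTusServer();
const file = Buffer.from("0123456789abcdefghij"); // 20 bytes standing in for a video
const id = server.create(file.length);

let interrupted = false;
try {
  uploadFrom(server, id, file, 4, 2); // the "network" drops after 2 chunks (8 bytes)
} catch (err) {
  interrupted = true;
}

const resumeOffset = server.head(id);                // 8: the server kept the first two chunks
const finalOffset = uploadFrom(server, id, file, 4); // 20: only the remaining 12 bytes were sent
console.log({ interrupted, resumeOffset, finalOffset });
```

The important detail is that the second call never resends the first 8 bytes: it starts from the offset the server reports, which is exactly what saves our users from starting over.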

Tus is now adopted and trusted by many products, such as Vimeo.

Now, if we are going to cook this awesome meal, let’s first list our ingredients:

- TusPHP for the server side
  - We used the PHP library, but you can use other server-side implementations like Node.js, Go or others.

- tus-js-client for the client side
  - TusPHP already ships a PHP client, but I think we would all agree to use the JavaScript implementation for the client over the PHP one.

Getting Started

Let’s install TusPHP (for the server side) using Composer:

```shell
composer require ankitpokhrel/tus-php
```

and tus-js-client (for the client side) using npm:

```shell
npm install tus-js-client
```

And here is the basic usage of both:

```javascript
var tus = require("tus-js-client");

$('#uploadBtn').change(function () {
    var file = this.files[0];
    if (typeof file === "undefined") {
        return;
    }

    // Some validations go here using `file.size` & `file.type`

    var upload = new tus.Upload(file, {
        // https://github.com/tus/tus-js-client#tusdefaultoptions
        endpoint: "/tus",
        retryDelays: [0, 1000, 3000, 5000, 10000],
        metadata: {
            filename: file.name,
            filetype: file.type
        },
        onError: function (error) {
            // Handle errors here
        },
        onProgress: function (bytesUploaded, bytesTotal) {
            // Reflect values on your progress bar using `bytesTotal` & `bytesUploaded`
        },
        onSuccess: function () {
            // Upload has been completed
        }
    });

    // Start the upload
    upload.start();
});
```

```php
<?php

class TusController {

    public function indexAction() {
        // Disable views/layout the way that suits your framework

        $server = new TusPhp\Tus\Server(); // Using File Cache (over Redis) for simpler setup
        $server->setApiPath('/tus/index') // tus server endpoint.
            ->setUploadDir('/tmp'); // uploads dir.

        $response = $server->serve();
        $response->send();
    }
}
```
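
The retryDelays option in the client snippet above is what covers our first requirement: each entry is the number of milliseconds to wait before the next retry, and the length of the array caps how many retries are made. Here is a simplified sketch of that idea. It is not tus-js-client’s actual implementation, and `withRetries`, `recordingSleep` and `flakyUpload` are hypothetical names; the sleep function is injected so the schedule can be observed without really waiting.

```javascript
// Runs `attempt` and, on failure, waits for the next delay in `retryDelays` before trying again.
// `sleep` is injectable so the example finishes instantly.
async function withRetries(attempt, retryDelays, sleep) {
  let retriesUsed = 0;
  for (;;) {
    try {
      return await attempt();
    } catch (err) {
      if (retriesUsed >= retryDelays.length) {
        throw err; // schedule exhausted: this is where an error handler would take over
      }
      await sleep(retryDelays[retriesUsed]);
      retriesUsed += 1;
    }
  }
}

// Usage: an upload that fails three times, then succeeds.
const waits = [];
const recordingSleep = async (ms) => { waits.push(ms); };
let calls = 0;
const flakyUpload = async () => {
  calls += 1;
  if (calls <= 3) {
    throw new Error("network interrupted");
  }
  return "done";
};

const outcome = withRetries(flakyUpload, [0, 1000, 3000, 5000, 10000], recordingSleep);
outcome.then((result) => console.log(result, waits)); // done [ 0, 1000, 3000 ]
```

With the schedule we used, the first retry is immediate and later ones back off gradually, which gives a shaky connection some time to recover before the upload is reported as failed.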

I tried this combination locally and everything worked like a charm. But then we deployed the solution to our Beta servers, and this is when the panic began 🙈.

Production Shocks

I know we deployed to the Beta servers only, but let’s face it, most of us expect Beta to be about 5 minutes away from deploying to production, which wasn’t our case 🙂. So, these are the problems we faced once we were running in a real production-like environment:

Permission Denied for File Cache

Remember that we used the File Cache for simplicity? Well, the library expects you to configure the cache file’s path; otherwise it creates the cache file inside its own folder under the vendor folder. That gave us a Permission Denied error, because the process was trying to write to the vendor folder without the right permissions. So let’s pass a configuration with a path that is writable by all of the server’s users, like the /tmp folder (there is no need to keep a long-term cache; by design the cache won’t exceed 24 hours per file). Here is how you can do so:

```php
TusPhp\Config::set([
    /**
     * File cache configs.
     *
     * Adding the cache in the '/tmp/' because it is the only place writable by
     * all users on the production server.
     */
    'file' => [
        'dir' => '/tmp/',
        'name' => 'tus_php.cache',
    ],
]);
```

HTTPS at the load-balancer

Locally I’m using a self-signed certificate, so all the traffic reaching the backend is HTTPS. In production, however, SSL is terminated at the load balancer, which forwards the traffic to our servers as plain HTTP. This tricked Tus into believing that the upload URL is HTTP only, which breaks the upload, so I had to fix the response headers to put HTTPS back:

```php
// In the Tus controller, before sending the response
// get/set headers the way that suits your framework
$location = $response->headers->get('location');
if (!empty($location)) { // `location` is sent to the client only the 1st time
    $location = preg_replace("/^http:/i", "https:", $location);
    $response->headers->set('location', $location);
}
```
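
To show what that rewrite does to a concrete value, here is the same replacement as a small standalone function, written in JavaScript only to keep the extra examples in one language. It also adds one variation that is not part of our fix: it rewrites the scheme only when the original request was HTTPS, assuming your load balancer passes that along in an X-Forwarded-Proto header. The function name and the sample URLs are made up for illustration.

```javascript
// Rewrites an `http:` Location to `https:` when the original client request was HTTPS.
// `forwardedProto` stands for the value of an `X-Forwarded-Proto` header
// (an assumption about the load balancer; our own fix rewrites unconditionally).
function fixLocationScheme(location, forwardedProto) {
  if (!location || forwardedProto !== "https") {
    return location;
  }
  return location.replace(/^http:/i, "https:");
}

console.log(fixLocationScheme("http://example.com/tus/index/abc123", "https"));
// https://example.com/tus/index/abc123
console.log(fixLocationScheme("http://localhost/tus/index/abc123", "http"));
// http://localhost/tus/index/abc123 (plain HTTP setup is left alone)
console.log(fixLocationScheme("https://example.com/tus/index/abc123", "https"));
// https://example.com/tus/index/abc123 (already secure, unchanged)
```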

PATCH is not supported

Yet it was still not working. It turned out that our production environment doesn’t allow PATCH requests, and this is where tus-js-client came to the rescue with its option overridePatchMethod: true, which sends the chunks as regular POST requests instead.
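
With that option on, tus-js-client sends each chunk as a POST request carrying an X-HTTP-Method-Override: PATCH header, so the server side has to map the request back to PATCH. The following is a generic sketch of that mapping, not TusPHP’s own code; `effectiveMethod` is a hypothetical helper.

```javascript
// Returns the HTTP method the server should act on.
// A POST carrying `X-HTTP-Method-Override` is treated as the overriding method.
function effectiveMethod(method, headers) {
  const normalized = {};
  for (const [name, value] of Object.entries(headers)) {
    normalized[name.toLowerCase()] = value; // header names are case-insensitive
  }
  const override = normalized["x-http-method-override"];
  if (method.toUpperCase() === "POST" && override) {
    return override.toUpperCase();
  }
  return method.toUpperCase();
}

console.log(effectiveMethod("POST", { "X-HTTP-Method-Override": "PATCH" })); // PATCH
console.log(effectiveMethod("POST", {}));                                    // POST
console.log(effectiveMethod("patch", {}));                                   // PATCH
```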

Re-Uploading starts from 0% !!

Now everything worked fine. On my local machine, uploads were lightning fast, so I couldn’t really test the resumability part of our solution. So let’s try it on Beta: cancel the upload at 40% and then upload the file again. Uh-oh, it started from 0%. What the heck just happened?!

After a lot of digging in my server code (my prime suspect), it turned out that tus-js-client has an option called chunkSize with a default value of Infinity, which means the whole file is uploaded in a single request 🙈. So I fixed it by specifying a chunk size of 1 MB: chunkSize: 1000 * 1000
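
A bit of arithmetic shows why the chunk size matters so much. The sketch below assumes, as we observed in our setup, that the server only keeps chunks that arrived completely; `resumeOffset` is a hypothetical helper, and the byte counts are example numbers.

```javascript
// Offset an upload can resume from, assuming the server keeps only fully received chunks.
function resumeOffset(bytesSentBeforeDrop, chunkSize) {
  if (!Number.isFinite(chunkSize)) {
    return 0; // one giant request: nothing is kept unless the whole file arrives
  }
  return Math.floor(bytesSentBeforeDrop / chunkSize) * chunkSize;
}

const sentBeforeDrop = 400250000; // the connection dropped a bit past 40% of a 1 GB video

console.log(resumeOffset(sentBeforeDrop, Infinity));    // 0
console.log(resumeOffset(sentBeforeDrop, 1000 * 1000)); // 400000000
console.log(sentBeforeDrop - resumeOffset(sentBeforeDrop, 1000 * 1000)); // 250000 bytes to resend
```

So with 1 MB chunks an interruption costs at most one chunk, while with the default the whole 40% has to be sent again. Smaller chunks lose less on every drop but mean more requests, so treat 1 MB as the value that worked for us rather than a universal answer.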

Wrapping up the whole solution

After putting it all together, here is our final version:

```javascript
var tus = require("tus-js-client");

$('#uploadBtn').change(function () {
    var file = this.files[0];
    if (typeof file === "undefined") {
        return;
    }

    // Some validations go here using `file.size` & `file.type`

    var upload = new tus.Upload(file, {
        // https://github.com/tus/tus-js-client#tusdefaultoptions
        endpoint: "/tus",
        retryDelays: [0, 1000, 3000, 5000, 10000],
        overridePatchMethod: true, // Because our production servers' setup doesn't support PATCH http requests
        chunkSize: 1000 * 1000, // Bytes
        metadata: {
            filename: file.name,
            filetype: file.type
        },
        onError: function (error) {
            // Handle errors here
        },
        onProgress: function (bytesUploaded, bytesTotal) {
            // Reflect values on your progress bar using `bytesTotal` & `bytesUploaded`
        },
        onSuccess: function () {
            // Upload has been completed
        }
    });

    // Start the upload
    upload.start();
});
```

```php
<?php

class TusController {

    public function indexAction() {
        // Disable views/layout the way that suits your framework
        $server = $this->_getTusServer();
        $response = $server->serve();
        $this->_fixNonSecureLocationHeader($response);
        $response->send();
    }

    private function _getTusServer() {
        TusPhp\Config::set([
            /**
             * File cache configs.
             *
             * Adding the cache in the '/tmp/' because it is the only place writable by
             * all users on the production server.
             */
            'file' => [
                'dir' => '/tmp/',
                'name' => 'tus_php.cache',
            ],
        ]);

        $server = new TusPhp\Tus\Server(); // Using File Cache (over Redis) for simpler setup
        $server->setApiPath('/tus/index') // tus server endpoint.
            ->setUploadDir('/tmp'); // uploads dir.

        return $server;
    }

    /**
     * The `location` header is where the client js library will upload the file through,
     * but the load-balancer takes the `https` request & passes it as
     * `http` only to the servers, which tricks the Tus server,
     * so we have to change it back here.
     *
     * @param object $response The response returned by `$server->serve()`
     */
    private function _fixNonSecureLocationHeader($response) {
        $location = $response->headers->get('location');
        if (!empty($location)) { // `location` is sent to the client only the 1st time
            $location = preg_replace("/^http:/i", "https:", $location);
            $response->headers->set('location', $location);
        }
    }
}
```

Conclusion

Before Tus, I always thought that there was only one traditional way of uploading files and that no one could touch it, to the extent that I felt it was pointless to even search for a solution. But never stop at your own boundaries; break them and go beyond, and you will reach destinations you never thought possible.

Now uploading large files has become dead simple, and I really want to thank the team behind Tus.io for what they did.